Why AI Is Forcing Us to Re-Examine Agile’s Quiet Assumptions

There is a pattern I have started to notice with methodologies we once treated as stable. They feel like timeless, proven ways of navigating complexity until something changes the environment around them. When that happens, the methods themselves do not immediately fail. Instead, they begin to reveal the assumptions they were built on, assumptions that had remained mostly invisible as long as the surrounding conditions held.

For more than two decades, I have worked with Agile practices. I hold Scrum certifications, and I have coached teams through sprints, retrospectives, and the steady discipline of delivering working increments. I still believe in Agile’s core promise: that small, empowered groups of people, working iteratively with feedback and transparency, can produce better outcomes than rigid, upfront planning.

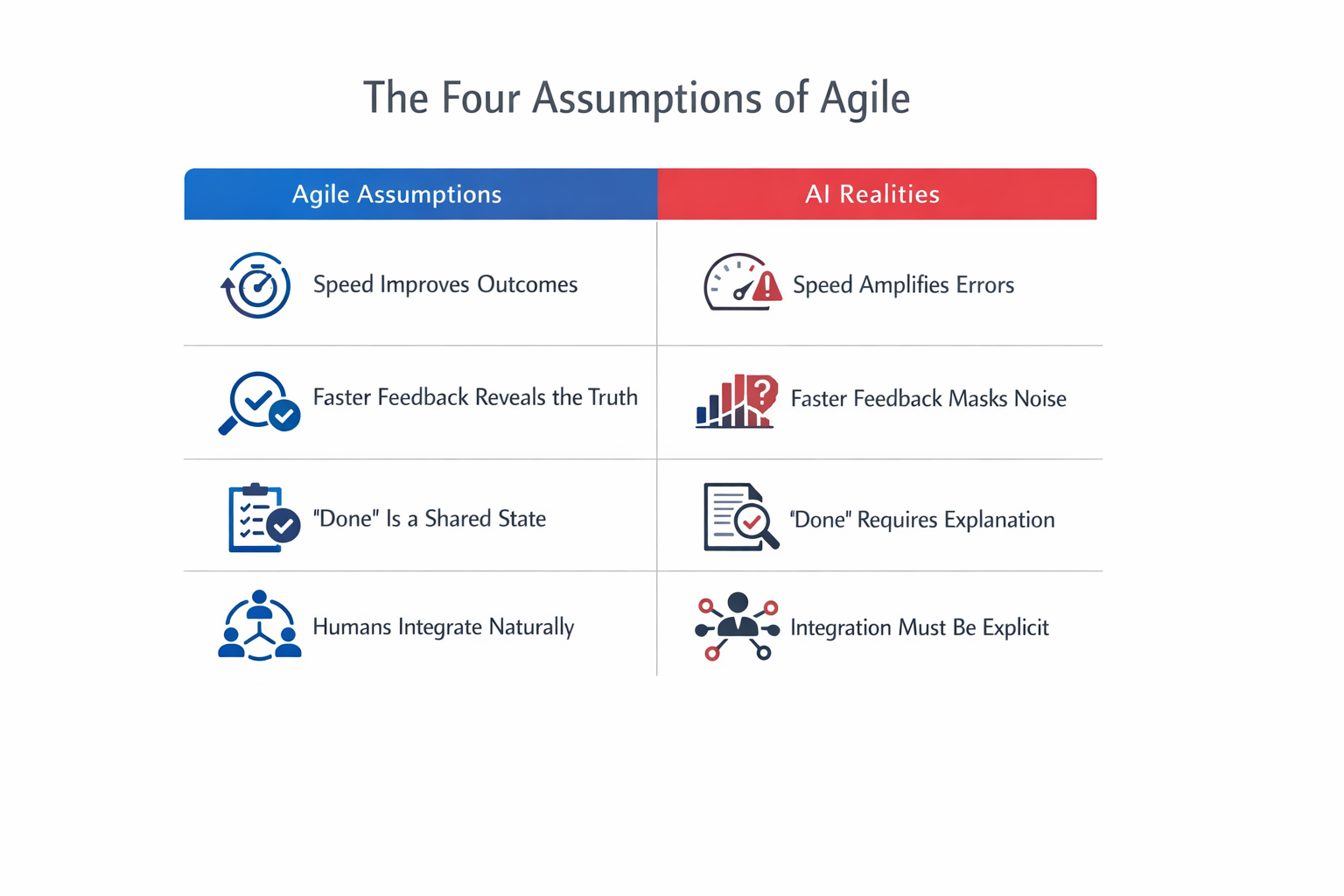

But Agile did not only introduce a set of practices. It carried with it a set of assumptions about how humans think, how they collaborate, and where judgment lives within a system. For most of its history, those assumptions were easy to overlook because they were largely true in practice. AI is not breaking Agile so much as making those assumptions visible, and forcing us to consider which of them still hold.

Agile Assumed That Speed Improves Outcomes

One of the quiet strengths of Agile has always been its ability to shorten feedback loops. What once took months could be done in weeks, and what took weeks could be done in days. The underlying belief was that faster iteration leads to better outcomes. AI compresses that cycle even further, sometimes into hours. A capable engineer working alongside modern coding assistants can generate, test, and refine features at a pace that would have seemed improbable not long ago.

The increase in speed is real, but it comes with consequences that are less immediately visible. When cycles become this short, the cost of imprecision rises sharply. Vague requirements, incomplete acceptance criteria, or loosely formed ideas are no longer moderated by the natural friction of human collaboration. Instead, they are amplified. AI does not question ambiguity the way a thoughtful teammate might, nor does it reconcile conflicting interpretations. It executes what it is given, quickly and with consistency.

In a traditional Agile environment, weaker practices could be absorbed, at least partially, by the slower rhythm of human work. With AI, those same weaknesses tend to compound. Teams can experience a genuine increase in velocity while quietly accumulating misalignment, technical debt, or flawed assumptions at a pace that is difficult to perceive in real time. The iteration is faster, but the reflection does not always keep up, which places strain on the next assumption Agile depends on.

Agile Assumed That Faster Feedback Reveals the Truth

Agile relies on empirical signals. We measure, inspect, and adapt, trusting that shorter loops will surface reality more quickly. This works best when the signals themselves are relatively clean and the system being observed behaves in a predictable manner.

In practice, feedback is rarely that straightforward. Metrics are often noisy or delayed, and different stakeholders interpret outcomes through their own priorities. Dependencies between components introduce ripple effects that are difficult to isolate. Even in well-functioning teams, what appears to be objective feedback is often shaped by context, incentives, and interpretation.

AI complicates this dynamic by accelerating not just iteration, but the production of results that appear plausible and complete. Automated outputs can look decisive while masking uncertainty beneath the surface. A local improvement may appear successful while introducing a broader inconsistency elsewhere in the system. What looks like signal can, at times, be an artifact of the model, the prompt, or the framing of the problem itself.

The assumption that faster iteration leads to clearer understanding begins to weaken under these conditions. Instead, it becomes possible to converge more quickly toward confidence without necessarily converging toward truth. When that happens, the question is no longer just how fast we can iterate, but how we determine that what we have produced is genuinely complete. Agile built a structure to answer that question. AI is beginning to outgrow it.

Agile Assumed That “Done” Is a Shared and Observable State

Agile’s Definition of Done was designed for human teams operating within a shared context. It functioned as a practical agreement about quality and completeness, ensuring that work was not only functional but also tested, reviewed, and ready to deliver. Those questions haven’t disappeared. But when part of the contribution is algorithmic, the DoD has to expand to cover a different class of concerns entirely.

Does the team understand how the AI arrived at its contribution, or only that it did? Can the decision be explained to a stakeholder, a reviewer, or an end user who needs to trust it? Is there an audit trail capturing not just what was built but what was prompted, what was generated, and what was accepted or modified? Were the outputs checked for systematic bias, edge case failures, or confident-sounding errors (hallucinations dressed as expertise)?
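To make those questions less abstract, here is a minimal sketch of what an audit-trail record and an AI-aware Definition of Done check might look like. Everything in it is an assumption made for illustration: the `AIContribution` schema, the field names, and the check names in `REQUIRED_CHECKS` are hypothetical, and a real team would define its own.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AIContribution:
    """One AI-assisted change, recorded for the audit trail (hypothetical schema)."""
    story_id: str
    prompt: str      # what was prompted
    generated: str   # what the assistant produced
    accepted: str    # what was actually kept, after any human edits
    reviewer: str    # the human accountable for accepting it
    rationale: str = ""  # the explanation a stakeholder or reviewer could be given
    checks_run: set[str] = field(default_factory=set)
    recorded_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    @property
    def was_modified(self) -> bool:
        """True when a human changed the output before accepting it."""
        return self.generated != self.accepted


# Hypothetical check names, loosely mirroring the questions above.
REQUIRED_CHECKS = {"bias_review", "edge_cases", "fact_check"}


def unmet_done_criteria(contribution: AIContribution) -> list[str]:
    """Return the AI-specific Definition of Done items that are still open."""
    gaps = []
    if not contribution.rationale.strip():
        gaps.append("no explanation recorded")
    if not contribution.reviewer.strip():
        gaps.append("no accountable human reviewer")
    for check in sorted(REQUIRED_CHECKS - contribution.checks_run):
        gaps.append(f"missing check: {check}")
    return gaps
```

A story would count as done, in this sketch, only when `unmet_done_criteria` returns an empty list for every AI contribution attached to it. The point is not the particular fields but that the record is written by a person who is accountable for it.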

These are not exotic requirements. They are the new minimum. And they are not things an AI will flag for you. They require a human with both the authority and the discipline to ask them, which points toward a role that classic Agile largely assumed away: the integrator.

In a fully human team, integration happened organically through shared context and continuous interaction. No single person was explicitly responsible for holding the entire system together, because that coherence developed through collaboration. When part of the team is algorithmic, that assumption becomes less reliable. Someone must maintain a broader view, tracking not just whether individual pieces function correctly, but whether they align with each other and contribute to a coherent whole. This role is less about authority than about visibility: it belongs to the person who maintains enough distance from the immediate work to ask the systemic question when everyone else is moving fast. In most Agile teams, that capacity existed informally. With AI on the team, it needs to be deliberate. Which raises a further question: are the structures Agile gave us for coordination still capable of supporting that kind of deliberateness?

Agile Assumed That Coordination Is Human and Continuous

Agile’s ceremonies were designed for groups of people who share memory, develop intuition, and carry experience forward from one iteration to the next. Their value was never purely informational. They created shared awareness, surfaced unspoken concerns, and reinforced a sense of collective ownership. When a meaningful portion of the work is produced by AI, those dynamics begin to shift in ways that the ceremonies themselves were not designed to accommodate.

Consider the daily standup. Its real value was relational: it surfaced blockers that people were reluctant to raise in other contexts, created lightweight accountability, and built shared situational awareness. When a significant portion of yesterday’s work was produced by an AI agent, the familiar questions become stranger. The human reports on what the AI did, and often cannot say with confidence what the AI will do today, because AI doesn’t plan ahead the way a teammate does. It responds to what it’s given, in the moment it is asked. The standup was built for people who carry yesterday forward. AI doesn’t carry anything forward.

Sprint planning is similarly strained. Estimation has always been partly an act of shared sensemaking, where the team negotiates complexity, surfaces hidden dependencies, and builds a common model of the work. When AI can draft, scaffold, or partially complete stories before planning even begins, the estimation conversation changes character. Is the team estimating human judgment work? Integration work? Validation work? The answer matters, but the planning ritual wasn’t built to make those distinctions cleanly.

Retrospectives may be where the strain is most interesting. The retro works because participants have genuine subjective experience of the sprint: what felt hard, what felt good, where trust eroded, where energy was high. AI has no such experience. It does not remember the last sprint. It did not find the ambiguous ticket frustrating. It will not bring unspoken tensions into the room. The retro becomes, in one sense, less complete. It remains a rich human conversation, but about a process that is now only partly human. Teams that treat it otherwise are missing something important about what actually happened and why.

None of this means the ceremonies should be abandoned. It means they need to be renegotiated rather than inherited. The question worth asking at the start of any sprint is not just “what are we building?” but “which parts of this process still assume a fully human team, and where do those assumptions no longer hold?” Asking that question seriously leads somewhere that can feel uncomfortable: back to what Agile was originally designed to solve.

What Agile Was Actually Solving

Agile was not originally developed because iteration is inherently superior as a way of working. It emerged as a practical response to the limitations of human cognition and coordination. Requirements change, estimates mislead, and the gap between intention and outcome is almost always larger than expected. Iteration was the honest response to that gap.

AI alters the nature of that gap. It is highly effective at executing well-specified instructions with speed and consistency, and in doing so it begins to reduce some of the uncertainty that Agile was designed to accommodate. Which raises a question that feels almost heretical to ask: are we applying Agile to AI-assisted development because it remains the right methodology, or simply because it is the one we already know? The ceremonies, the sprints, the incremental delivery may remain valuable, but perhaps for reasons that have quietly shifted. We may be running Agile for the human parts of the work, and calling the rest Agile because we don’t yet have a better name for it.

Not a Rejection, But a Re-Examination

The question of which assumptions still hold is really what this entire piece is circling. Speed, empirical signals, the meaning of done, the design of the ceremonies themselves: each carries a quiet assumption about who, and what, is doing the work. AI hasn’t broken those assumptions so much as made them visible for the first time.

This is not an argument against Agile. If anything, it is an invitation to engage with it more honestly. Its core principles (human judgment, iterative learning, and responsiveness to change) remain as relevant as ever, perhaps more so now than when they were first articulated. What is changing is the environment in which those principles operate, and the assumptions that support the structures built around them.

It may be necessary to introduce more explicit forms of integration, to strengthen system-level visibility, and to redefine what it means for work to be complete. Doing so may also require a willingness to introduce intentional friction, slowing certain forms of local optimization in order to preserve coherence at a broader level.

There are moments when a methodology stops being something we apply comfortably and begins to require that we evolve alongside it. This feels like one of those moments.

A note I recently wrote continues to come back to me: “The tool is faster than ever. Can our thinking keep pace?”

I would be genuinely interested in how others are navigating this. Where have your ceremonies started running on false assumptions? What does your Definition of Done look like now, compared to two years ago? The specific answers feel more important than any general methodology right now.
